Multimodal Voice Modeling Using Acoustic and High-Speed Video Signals with Machine Learning

Project Overview

Built from the bench up, this program begins with physical vocal-fold models and controlled airflow, collecting direct intraglottal and subglottal pressures, acoustic recordings, and high-speed video under reproducible conditions. On top of these grounded measurements, we have developed physics-informed models—contact mechanics for collision pressure and finite-element inversion for tissue properties—to generate interpretable, pathology-relevant features such as spatiotemporal opening and closure patterns, symmetry and energy measures, and cumulative collision-pressure "dose." These features are then fed into machine learning models for classification, enabling differentiation of common lesions (for example, nodules, polyps, and posterior glottal insufficiency). The result is a coherent chain—physical modeling → multimodal measurement → physics-informed features → machine-learning classification—that supports diagnosis, therapy planning, and surgical decision-making.

Related Publications

-

Collision pressure and dissipated power dose in a self-oscillating silicone vocal fold model with a posterior glottal opening

Journal of Speech, Language, and Hearing Research, 65(8), 2829-2845

-

Effect of nodule size and stiffness on phonation threshold and collision pressures in a synthetic hemilaryngeal vocal fold model

The Journal of the Acoustical Society of America, 153(1), 654-664

-

Vocal fold dynamics in a synthetic self-oscillating model: Intraglottal aerodynamic pressure and energy

The Journal of the Acoustical Society of America, 150(2), 1332-1345

-

Vocal fold dynamics in a synthetic self-oscillating model: Contact pressure and dissipated-energy dose

The Journal of the Acoustical Society of America, 150(1), 478-489

-

Intraglottal aerodynamic pressure and energy transfer in a self-oscillating synthetic model of the vocal folds

medRxiv, 2020.11.20.20235911

-

Estimating vocal fold contact pressure from raw laryngeal high-speed videoendoscopy using a Hertz contact model

Applied Sciences, 9(11), 2384

-

Bayesian inference of vocal fold material properties from glottal area waveforms using a 2D finite element model

Applied Sciences, 9(13), 2735

Temporal intraglottal pressure variations at four positions in a vocal fold model oscillating at 150 Hz, shown with synchronized high-speed imaging.

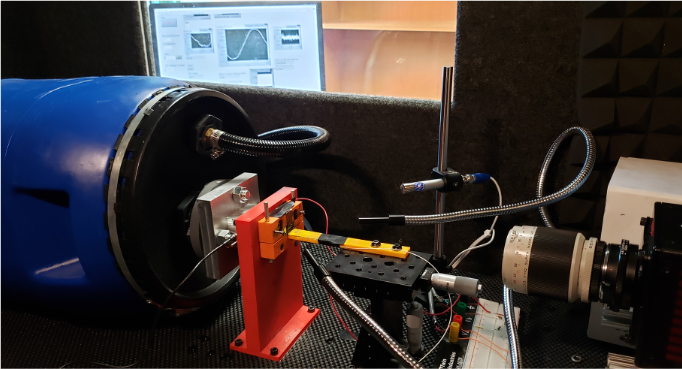

Designed setup for extracting voice signals and high-speed vocal fold videos.

Ambulatory voice monitoring using neck surface acceleration.